Why (and How) I Built a Go AI SDK

GoAI is a Go (Golang) LLM library with 22+ providers, 2 dependencies, and type-safe generics. It is at v0.6.1 and targets Go 1.25+. I built it to learn Go by adding AI to infrastructure that already runs on Go.

The Go AI SDK landscape

Python has LangChain, LlamaIndex, LiteLLM. TypeScript has the Vercel AI SDK. Go has options, but none covered all the bases.

What I found

| Library | Stars | Created | Providers | What's missing |
|---|---|---|---|---|
| go-openai | ~10.6k | Aug 2020 | 1 | Single provider only |
| Eino (ByteDance) | ~10.5k | Dec 2024 | 10 | Graph framework, different scope |
| LangChainGo | ~9k | Feb 2023 | ~14 | 170+ deps, no MCP, no generics schema |
| Google ADK Go | ~7.5k | May 2025 | Gemini-first | Agent framework, Gemini-optimized |
| Genkit Go (Google) | ~5.7k* | 2024 | ~6 | Google Cloud-heavy, 129 deps |
| openai-go | ~3.1k | Jul 2024 | 1+Azure | Single provider by design |
| anthropic-sdk-go | ~960 | Jul 2024 | 1+Bedrock | Single provider by design |
| gollm | ~570 | Jul 2024 | 6 | Limited tool calling, limited streaming |
| Jetify AI | ~230 | May 2025 | 2 | Early stage, 2 providers |
| instructor-go | ~200 | May 2024 | 4 | Structured output only |
| GoAI | new | Mar 2026 | 22+ | 2 deps, this post |

*Genkit stars shared across JS, Go, and Python.

The gaps I kept running into:

No SchemaFrom[T]: generating JSON Schema from Go structs using generics. Only Genkit Go has this, but it pulls in 129 dependencies.

No built-in MCP: Model Context Protocol connects to external tool servers (filesystem, GitHub, databases, Kubernetes APIs). For infra and edge use cases, this is how agents interact with surrounding systems.

No provider-defined tools: OpenAI has web search, code interpreter, and file search. Anthropic has computer use, bash, text editor, web fetch, and code execution. Google has Google Search, URL context, and code execution. None of the Go LLM libraries I evaluated expose these.

No prompt caching: Anthropic and OpenAI support cache control to reduce cost and latency.

No streaming structured output: no StreamObject[T] that progressively populates a Go struct as JSON arrives.

Dependency weight: a library that handles API keys for every major AI provider is a high-value target. Fewer dependencies mean a smaller attack surface.

Why I built GoAI

1. To learn Go. I'm not a Go expert; I built this to learn by solving a real problem. PRs welcome.

2. To learn AI-assisted development. Designed by me, built with Claude Code. I'll write a separate post on the workflow, what worked, and what didn't.

3. To build a foundation for agent orchestration. Lightweight AI agents in CI/CD, Kubernetes, CLI, edge. GoAI is the foundation layer.

What I learned from the Vercel AI SDK

The Vercel AI SDK is the reference. GoAI's API surface is directly inspired by it:

```go
goai.GenerateText(ctx, model, opts...)
goai.StreamText(ctx, model, opts...)
goai.GenerateObject[T](ctx, model, opts...)
goai.StreamObject[T](ctx, model, opts...)
goai.Embed(ctx, model, value, opts...)
goai.EmbedMany(ctx, model, values, opts...)
goai.GenerateImage(ctx, model, opts...)
```

What I took from Vercel:

Unified API surface across all providers

Minimal provider interface, 2-3 methods per provider, SDK handles the rest

Tool loop with MaxSteps: the model calls a tool, the SDK executes it, feeds the result back, and repeats

Streaming-first

Retry with exponential backoff and Retry-After header awareness

Go features that solved real problems

Go generics for type-safe LLM output. GenerateObject[T]() returns ObjectResult[T] where .Object is T, not interface{}. SchemaFrom[T]() walks the struct via reflect to generate JSON Schema, with cycle detection, embedded struct flattening, and nullable pointer fields. No schema files, no codegen. Docs.

```go
type Recipe struct {
	Name        string   `json:"name"`
	Ingredients []string `json:"ingredients"`
	Steps       []string `json:"steps"`
}

result, err := goai.GenerateObject[Recipe](ctx, model,
	goai.WithPrompt("A simple pasta recipe"),
)
if err != nil {
	log.Fatal(err)
}
// result.Object is Recipe, fully typed
fmt.Println(result.Object.Name)
```
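To make the reflection idea concrete, here is a minimal, hypothetical schemaFrom[T] built on the standard library. It is not GoAI's SchemaFrom[T]: it maps basic kinds to JSON Schema types and reads json tags, but it has no cycle detection, embedded-struct flattening, or pointer handling, so a self-referential type would recurse forever.

```go
package main

import (
	"encoding/json"
	"fmt"
	"reflect"
	"strings"
)

// schemaFrom (hypothetical) derives a JSON Schema for T via reflection.
func schemaFrom[T any]() map[string]any {
	return schemaOf(reflect.TypeOf((*T)(nil)).Elem())
}

func schemaOf(t reflect.Type) map[string]any {
	switch t.Kind() {
	case reflect.String:
		return map[string]any{"type": "string"}
	case reflect.Bool:
		return map[string]any{"type": "boolean"}
	case reflect.Int, reflect.Int8, reflect.Int16, reflect.Int32, reflect.Int64,
		reflect.Uint, reflect.Uint8, reflect.Uint16, reflect.Uint32, reflect.Uint64:
		return map[string]any{"type": "integer"}
	case reflect.Float32, reflect.Float64:
		return map[string]any{"type": "number"}
	case reflect.Slice, reflect.Array:
		return map[string]any{"type": "array", "items": schemaOf(t.Elem())}
	case reflect.Struct:
		props := map[string]any{}
		required := []string{}
		for i := 0; i < t.NumField(); i++ {
			f := t.Field(i)
			if !f.IsExported() {
				continue
			}
			tag := f.Tag.Get("json")
			if tag == "-" {
				continue // field explicitly excluded from JSON
			}
			name := f.Name
			if n, _, _ := strings.Cut(tag, ","); n != "" {
				name = n // use the json tag name when present
			}
			props[name] = schemaOf(f.Type)
			required = append(required, name)
		}
		return map[string]any{"type": "object", "properties": props, "required": required}
	default:
		return map[string]any{} // unconstrained for kinds this sketch skips
	}
}

type Recipe struct {
	Name        string   `json:"name"`
	Ingredients []string `json:"ingredients"`
	Steps       []string `json:"steps"`
}

func main() {
	b, err := json.Marshal(schemaFrom[Recipe]())
	if err != nil {
		panic(err)
	}
	fmt.Println(string(b))
}
```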

Functional options for composable configuration. The WithX() pattern gives composability and extensibility without breaking changes. Separate option types (Option vs ImageOption) let the compiler catch misuse, and options can be bundled via WithOptions(). Docs.

```go
result, err := goai.GenerateText(ctx, model,
	goai.WithSystem("You are a helpful assistant"),
	goai.WithPrompt("Hello"),
	goai.WithMaxSteps(3),
	goai.WithTools(searchTool, calculatorTool),
	goai.WithMaxRetries(2),
	// Hook that receives response metadata such as latency and token usage.
	goai.WithOnResponse(func(info goai.ResponseInfo) {
		log.Printf("latency=%v tokens=%d", info.Latency, info.Usage.TotalTokens)
	}),
)
if err != nil {
	log.Fatal(err)
}
fmt.Println(result.Text)
```

Interfaces for provider abstraction. Three interfaces (LanguageModel, EmbeddingModel, ImageModel), implicitly satisfied. 22+ providers conform independently. Adding a provider never touches core. Docs.

Goroutines and channels for streaming. A background goroutine consumes the provider stream (SSE for most providers, binary EventStream for Bedrock, NDJSON for Gemini), and callers read from a <-chan string. All functions are context-aware, and retries respect ctx.Done(). Tool calls execute in parallel with bounded concurrency via semaphores. Docs.

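```go
stream, err := goai.StreamText(ctx, model, goai.WithPrompt("Tell me a story"))
if err != nil {
	log.Fatal(err)
}
// Print text chunks as they arrive.
for text := range stream.TextStream() {
	fmt.Print(text)
}
// Check for an error that ended the stream early.
if err := stream.Err(); err != nil {
	log.Fatal(err)
}
```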

sync.Map and sync.Once. Generated schemas are cached in a sync.Map keyed by type, and stream consumption uses sync.Once to start the internal goroutine exactly once.
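A sketch of a type-keyed cache with sync.Map, assuming a hypothetical buildSchema that stands in for the expensive reflection walk. With LoadOrStore, concurrent first callers may each build a schema, but only one value is kept and every caller gets that same value.

```go
package main

import (
	"fmt"
	"reflect"
	"sync"
	"sync/atomic"
)

// schemaCache maps reflect.Type to a generated schema string.
var schemaCache sync.Map

// builds counts how often the expensive path runs (illustration only).
var builds atomic.Int64

// buildSchema is a hypothetical stand-in for reflection-based generation.
func buildSchema(t reflect.Type) string {
	builds.Add(1)
	return fmt.Sprintf(`{"title":%q}`, t.Name())
}

// cachedSchema returns the schema for T, building it on first use.
func cachedSchema[T any]() string {
	t := reflect.TypeOf((*T)(nil)).Elem()
	if v, ok := schemaCache.Load(t); ok {
		return v.(string) // fast path: already cached
	}
	v, _ := schemaCache.LoadOrStore(t, buildSchema(t))
	return v.(string)
}

type Recipe struct{ Name string }

func main() {
	var wg sync.WaitGroup
	for i := 0; i < 8; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			cachedSchema[Recipe]()
		}()
	}
	wg.Wait()
	fmt.Println(cachedSchema[Recipe](), "builds:", builds.Load())
}
```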

errors.As for cross-provider errors. APIError and ContextOverflowError are defined once, and every provider wraps its failures into them. errors.As(err, &apiErr) works through any wrapping depth. Docs.

internal/ for encapsulation. The openaicompat codec lives in internal/, shared across 13+ providers but invisible to users.

Fewer dependencies, smaller attack surface

GoAI's core module has 2 dependencies:

Direct: golang.org/x/oauth2

Indirect: cloud.google.com/go/compute/metadata

No HTTP frameworks, no JSON schema libraries, no third-party provider SDKs: GoAI makes raw HTTP calls and parses the responses directly.

This was a design choice from day one (mid-March 2026). For context on why this matters:

LiteLLM (Python, 95M monthly downloads): compromised; malicious versions harvested API keys and cloud credentials, with 40,000+ downloads in 40 minutes.

Axios (npm, 100M+ weekly downloads): compromised by a North Korean state actor, which delivered a cross-platform RAT via a fake dependency.

A multi-provider AI SDK concentrates API keys for every provider. Every dependency is an attack vector.

GoAI core: 2 dependencies

Eino: 37 dependencies

instructor-go: 41 dependencies

Genkit Go: 129 dependencies

LangChainGo: 170+ dependencies

The Langfuse and OpenTelemetry integrations live in separate go.mod submodules, so they don't add to the core's dependencies. Go's go.sum checksum verification and the checksum database (sum.golang.org, a transparency log) help too.

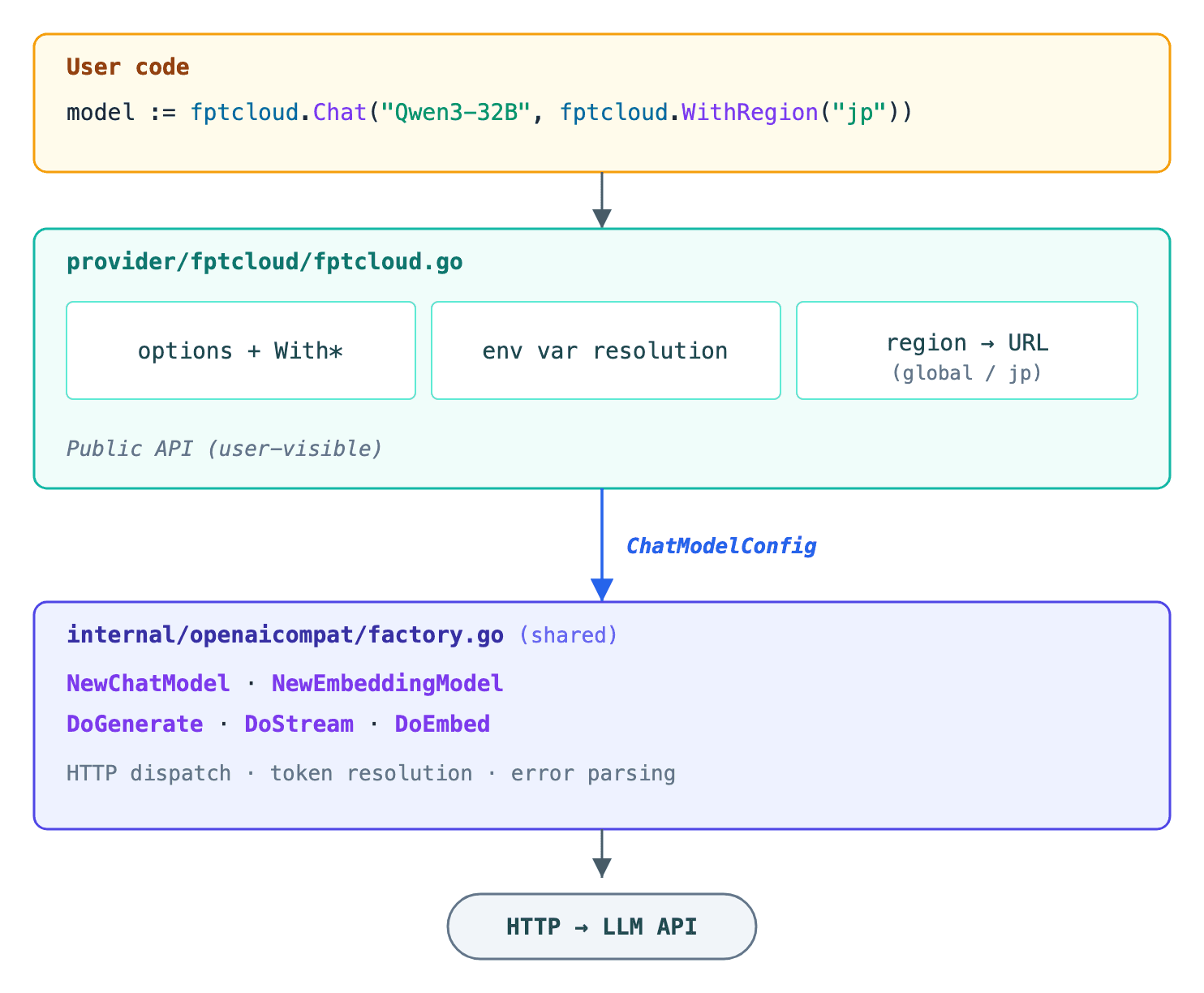

Architecture

Three layers:

1. User-facing API (generate.go, object.go, embed.go, image.go): seven functions, options parsing, retry, caching, tool loops, hooks. Context-aware. Multimodal input (PartImage, PartFile). Token usage tracking. Docs.

2. Provider interface (provider/provider.go): three interfaces, minimal surface, easy to mock. Docs.

3. Provider implementations (provider/openai/, provider/anthropic/, etc.): 22+ providers in separate packages. Import only what you use.

```go
type LanguageModel interface {
	ModelID() string
	DoGenerate(ctx context.Context, params GenerateParams) (*GenerateResult, error)
	DoStream(ctx context.Context, params GenerateParams) (*StreamResult, error)
}

type EmbeddingModel interface {
	ModelID() string
	DoEmbed(ctx context.Context, values []string, params EmbedParams) (*EmbedResult, error)
	MaxValuesPerCall() int
}

type ImageModel interface {
	ModelID() string
	DoGenerate(ctx context.Context, params ImageParams) (*ImageResult, error)
}
```

Providers implement DoGenerate and DoStream. GoAI handles retries, caching, tool execution, streaming, hooks.
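The split between "provider does one call" and "SDK handles the rest" is easiest to see in the tool loop. This is a hypothetical, synchronous sketch with simplified message and tool types (none of them GoAI's): call the model, run any requested tools, append the results to the history, and stop when the model answers without tool calls or maxSteps runs out.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// Hypothetical simplified types for illustration.
type ToolCall struct {
	Name  string
	Input json.RawMessage
}

type Message struct {
	Role    string // "user", "assistant", or "tool"
	Content string
}

type StepResult struct {
	Text      string
	ToolCalls []ToolCall // empty means a final answer
}

type Model func(history []Message) StepResult
type Tool func(input json.RawMessage) (string, error)

// runLoop repeats model call -> tool execution -> feed back, up to maxSteps.
func runLoop(m Model, tools map[string]Tool, prompt string, maxSteps int) (string, error) {
	history := []Message{{Role: "user", Content: prompt}}
	for step := 0; step < maxSteps; step++ {
		res := m(history)
		if len(res.ToolCalls) == 0 {
			return res.Text, nil
		}
		history = append(history, Message{Role: "assistant", Content: res.Text})
		for _, call := range res.ToolCalls {
			tool, ok := tools[call.Name]
			if !ok {
				return "", fmt.Errorf("unknown tool %q", call.Name)
			}
			out, err := tool(call.Input)
			if err != nil {
				out = "error: " + err.Error() // report tool failures to the model
			}
			history = append(history, Message{Role: "tool", Content: out})
		}
	}
	return "", errors.New("max steps reached without a final answer")
}

// fakeModel asks for the add tool, then answers using the tool result.
func fakeModel(history []Message) StepResult {
	if last := history[len(history)-1]; last.Role == "tool" {
		return StepResult{Text: "The sum is " + last.Content}
	}
	return StepResult{ToolCalls: []ToolCall{{Name: "add", Input: json.RawMessage(`{"a":2,"b":3}`)}}}
}

func addTool(input json.RawMessage) (string, error) {
	var args struct {
		A int `json:"a"`
		B int `json:"b"`
	}
	if err := json.Unmarshal(input, &args); err != nil {
		return "", err
	}
	return fmt.Sprint(args.A + args.B), nil
}

func main() {
	out, err := runLoop(fakeModel, map[string]Tool{"add": addTool}, "What is 2+3?", 3)
	fmt.Println(out, err)
}
```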

The shared codec

13+ providers share a single codec in internal/openaicompat/ (BuildRequest, ParseStream, ParseResponse). Providers with unique wire formats (Anthropic Messages API, Google Gemini REST, AWS Bedrock Converse, Cohere Chat v2) have their own implementations. Azure, vLLM, and MiniMax delegate through existing providers. Docs.

Tool calling

There are two kinds: user-defined (you write Execute with json.RawMessage input) and provider-defined (runs on provider infrastructure). User-defined tools requested in one step execute in parallel before the next model call. Docs.

Provider-defined tools

Providers expose built-in tools (web search, code execution, computer use). Each returns a provider.ToolDefinition that you wrap into goai.Tool. Docs.

```go
// Get the provider tool definition
def := anthropic.Tools.WebSearch(anthropic.WithMaxUses(5))

// Wrap into goai.Tool (provider-defined tools have no Execute func)
tools := []goai.Tool{{
	Name:                   def.Name,
	ProviderDefinedType:    def.ProviderDefinedType,
	ProviderDefinedOptions: def.ProviderDefinedOptions,
}}

result, err := goai.GenerateText(ctx, model,
	goai.WithPrompt("Search for Go AI libraries"),
	goai.WithTools(tools...),
)
if err != nil {
	log.Fatal(err)
}
fmt.Println(result.Text)
```

Available: Anthropic (10: Computer, Bash, TextEditor, WebSearch, WebFetch, CodeExecution + versioned variants), OpenAI (4: WebSearch, CodeInterpreter, FileSearch, ImageGeneration), Google (3: GoogleSearch, URLContext, CodeExecution), xAI (2), Groq (1).

Go MCP client

GoAI includes a built-in MCP client. Docs.

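```go
// Launch the filesystem MCP server as a subprocess and talk to it over stdio.
transport := mcp.NewStdioTransport("npx", []string{"@modelcontextprotocol/server-filesystem", "/tmp"})
client := mcp.NewClient("myapp", "1.0", mcp.WithTransport(transport))
client.Connect(ctx)
defer client.Close()

// Discover the server's tools and convert them for goai.WithTools.
mcpTools, err := client.ListTools(ctx, nil)
if err != nil {
	log.Fatal(err)
}
tools := mcp.ConvertTools(client, mcpTools.Tools)

result, err := goai.GenerateText(ctx, model,
	goai.WithPrompt("List files in /tmp"),
	goai.WithTools(tools...),
	goai.WithMaxSteps(3),
)
if err != nil {
	log.Fatal(err)
}
fmt.Println(result.Text)
```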

The client supports stdio, SSE, and HTTP transports.

Observability

Langfuse: trace-based observability, token counting, error tracking (separate go.mod, contributed by @oscarbc96 in #24)

OpenTelemetry: distributed tracing and metrics (separate go.mod)

Both hook into OnRequest, OnResponse, OnToolCall, OnToolCallStart, OnStepFinish.

Also supported:

Prompt caching via WithPromptCaching(), implementing arXiv 2601.06007v2: cache system prompts only (41-80% cost and 13-31% latency savings for agentic workloads). Cache token tracking is normalized across Anthropic, OpenAI, Google, and Bedrock

Reasoning tokens, supported for models that expose them

Citations: result.Sources for providers that return source annotations

Auto-batched embeddings: EmbedMany with bounded parallelism

WithToolChoice, WithTimeout, WithProviderOptions

ToolCallIDFromContext for execution tracing

compat provider for any OpenAI-compatible endpoint

90%+ test coverage with mock HTTP servers
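Auto-batching follows from the MaxValuesPerCall method on EmbeddingModel: split the input into chunks no larger than that limit, embed the chunks concurrently, and write the vectors back by offset. This is a hypothetical sketch with a fake embed function standing in for a provider call; perCall and parallel play the roles of the per-call limit and the concurrency bound, and parallel must be at least 1.

```go
package main

import (
	"fmt"
	"sync"
)

// batches splits values into consecutive slices of at most size elements.
func batches(values []string, size int) [][]string {
	if len(values) == 0 {
		return nil
	}
	if size <= 0 {
		size = len(values) // no limit: a single batch
	}
	var out [][]string
	for size < len(values) {
		values, out = values[size:], append(out, values[:size:size])
	}
	return append(out, values)
}

// embedAll embeds batches concurrently (at most parallel at once) and keeps order.
func embedAll(values []string, perCall, parallel int,
	embed func([]string) ([][]float64, error)) ([][]float64, error) {
	out := make([][]float64, len(values))
	var (
		wg       sync.WaitGroup
		mu       sync.Mutex
		firstErr error
	)
	sem := make(chan struct{}, parallel)
	for i, b := range batches(values, perCall) {
		wg.Add(1)
		go func(offset int, b []string) {
			defer wg.Done()
			sem <- struct{}{}
			defer func() { <-sem }()
			vecs, err := embed(b)
			if err == nil && len(vecs) != len(b) {
				err = fmt.Errorf("got %d vectors for %d values", len(vecs), len(b))
			}
			if err != nil {
				mu.Lock()
				if firstErr == nil {
					firstErr = err
				}
				mu.Unlock()
				return
			}
			copy(out[offset:], vecs) // batches write disjoint ranges
		}(i*perCall, b) // all batches but the last are full, so this is the start index
	}
	wg.Wait()
	if firstErr != nil {
		return nil, firstErr
	}
	return out, nil
}

func main() {
	fake := func(batch []string) ([][]float64, error) {
		vecs := make([][]float64, len(batch))
		for i, s := range batch {
			vecs[i] = []float64{float64(len(s))}
		}
		return vecs, nil
	}
	vecs, err := embedAll([]string{"a", "bb", "ccc", "dddd", "eeeee"}, 2, 2, fake)
	fmt.Println(vecs, err)
}
```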

Getting started

```shell
go get github.com/zendev-sh/goai
```

```go
model := openai.Chat("gpt-4o")

result, err := goai.GenerateText(ctx, model,
	goai.WithPrompt("Explain Go interfaces in 3 sentences"),
)
if err != nil {
	log.Fatal(err)
}
fmt.Println(result.Text)
```

Switch providers, same code:

```go
// Pick one; the GenerateText call stays the same.
model := anthropic.Chat("claude-sonnet-4-20250514")
model := google.Chat("gemini-2.0-flash")
model := bedrock.Chat("anthropic.claude-sonnet-4-20250514-v1:0")
model := ollama.Chat("llama3")
model := compat.Chat("my-model", compat.WithBaseURL("https://my-api.com/v1"))
```

More: structured output, streaming, tool calling, MCP, embeddings, image generation.

Benchmarks

Apple M2, 3 runs, in-process mock servers, identical SSE fixtures (50KB payload). Source.

| Metric | GoAI | Vercel AI SDK | Delta |
|---|---|---|---|
| Cold start | 569us | 13.89ms | 24x |
| Time to first chunk | 320us | 412us | 1.3x |
| Streaming throughput | 1.46ms/op | 1.62ms/op | 1.1x |
| GenerateText | 55.7us/op | 79.0us/op | 1.4x |
| Memory (1 stream) | 220KB | 676KB | 3x less |
| Schema generation | 3.6us/op | 3.5us/op | ~parity |

What's next

First commit was March 18. 18 releases in 3 weeks, now at v0.6.1. The SDK covers the provider layer: unified API, streaming, tool calling, structured output, MCP, observability. Next up is an agent orchestration layer on top of GoAI for CI/CD, Kubernetes, and CLI workflows.